For the last two years, Bing has ranked many of my syndication feeds ("RSS") higher than their webpage counterparts. Syndication feeds are machine-readable documents that people use to follow blog updates in a dedicated program, a feed reader. I’ve racked my brain trying to figure out why Bing does this, and I’ve finally found an answer.

For example, Bing puts the search engine topic feed on the first page of search results, but ranks the corresponding topic webpage at the bottom of page six. Yes, the two documents are technically duplicate content, which search engines hate. However, one is a webpage formatted for humans, and the other is a machine-readable XML document. (I’ve since added a presentational stylesheet to make the feed page appear as a webpage, but more on that later.)

This isn’t a problem unique to this website either. I’ve surveyed 80 of the blogs I subscribe to in my feed reader. For each one, I searched for the blog’s name and its author, after first verifying that both keywords appear on the webpage and in its feed. Bing listed the feed above or immediately below the front page in a little over half of the searches. So, what did these websites have in common with mine?

What we all have in common is that our feeds are published on a different domain than the main website. Perhaps Bing thinks they’re two different websites? Yet the feed and the webpage link to each other and indicate that they’re alternate representations of the same content. I didn’t find any examples of feeds published on the same domain as their website showing up in search results, however.

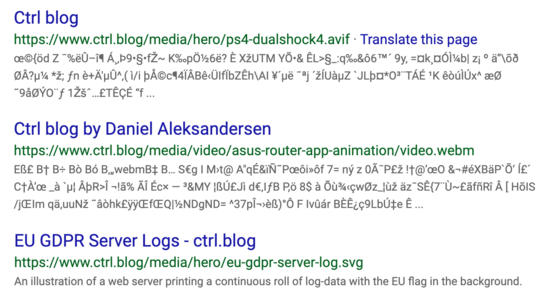

The issue isn’t limited to just feed files, though. Bing also includes other binary files in formats it doesn’t understand in its search results. Here’s a selection of binary or media asset type files that Bing includes in search results for my blog’s domain:

Bing search results include human-unreadable binary files.

The SVG result is easy to account for: Bing uses the metadata found in the image file itself. The other two results seem to hint at some kind of metadata misattribution. None of these files belong in general web search results, though. Two are images and belong in image search, and the last is a video file. What they have in common is that Bing shows them in results for keywords from the articles they belong to.

I’ve asked Bing Webmaster Support several times about the unwanted file assets in their search results. I expected the underlying issue to be similar to the feed issue: Bing should know that these files aren’t documents and don’t belong in regular search results. Bing’s support staff never seem to understand what I’m asking about, and we end up in a loop where they ask me to resubmit the same information over and over until I go away.

I don’t want to exclude these files from indexing, however. I still want my images to show up in Bing Image Search, for example.

I don’t know how to solve this last problem with media assets yet. However, I did find a solution for the feed problem that has been plaguing my site for so long. It’s kind of obvious, but I didn’t realize that the solution applied to syndication feeds until I wrote about FeedBurner back in .

The core problem with the feeds is that they duplicate the content of my webpages. I also think I’ve figured out why Bing preferred the unstyled feeds over my beautifully crafted webpages: file size. Bing Webmaster Central flagged some of my pages as overweight; there were just too many kilobytes on each topic page. The biggest pages listed 80 articles, complete with lots of markup for various image representations and metadata. The feeds, with far less markup, were presumably much lighter. I’ll get back to this problem towards the end of the article.

Bing somehow failed to realize that the feed documents weren’t intended for human consumption, though. My first fix was to make the feed pages human-readable. I added an XSLT stylesheet instruction to each feed, which web browsers use to transform the feed XML into a webpage. This let me craft a preview page for the feed, so visitors who stumbled upon it could actually make some sense of it. Visitors whom Bing misled onto these pages would no longer have a terrible experience!
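The post doesn’t show its feed markup, so here is a minimal sketch, assuming the standard `xml-stylesheet` processing instruction and a hypothetical stylesheet at `/feed-preview.xsl`. The function name `add_xslt_stylesheet` is also hypothetical; it inserts the instruction right after the XML declaration of an existing feed:

```python
import re
from xml.sax.saxutils import quoteattr


def add_xslt_stylesheet(feed_xml: str, xsl_href: str) -> str:
    """Insert an xml-stylesheet processing instruction into a feed document.

    Browsers that support XSLT apply the referenced stylesheet when the feed
    URL is opened directly, rendering it as a human-readable preview page.
    """
    # quoteattr escapes the URL and wraps it in quotes for the pseudo-attribute.
    pi = f'<?xml-stylesheet type="text/xsl" href={quoteattr(xsl_href)}?>'
    # Match only a real XML declaration ("<?xml" followed by whitespace),
    # not an existing "<?xml-stylesheet ...?>" instruction.
    declaration = re.match(r"<\?xml\s.*?\?>", feed_xml, re.DOTALL)
    if declaration:
        # The XML declaration must stay first, so insert right after it.
        end = declaration.end()
        return feed_xml[:end] + "\n" + pi + feed_xml[end:]
    # No declaration: the instruction can simply lead the document.
    return pi + "\n" + feed_xml


feed = (
    '<?xml version="1.0" encoding="utf-8"?>\n'
    '<feed xmlns="http://www.w3.org/2005/Atom"><title>Search engines</title></feed>'
)
print(add_xslt_stylesheet(feed, "/feed-preview.xsl"))
```

Feed readers generally ignore the instruction and parse the XML as usual. Emitting the instruction directly from the feed template is simpler still; the function just makes the placement rule explicit: after the XML declaration, before the root element.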

Then it struck me: how do web authors normally deal with duplicate pages? You guessed it: using `<link rel="canonical"/>`, of course! Link canonicalization is the industry-standard way to tell search engines which URL is the preferred one for a page. I’d already included canonical links on all my webpages, but not in the feed documents!

I quickly added canonical links to each of my feed pages, pointing to their equivalent webpages. This tells Bing and other search engines (none of which had the same problem as Bing, though) that the webpage is the preferred canonical representation of the document. Two weeks later, almost all of the unwanted feed results are gone from Bing’s search results!
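The post doesn’t say exactly how the canonical link is expressed in the feeds. One possibility, sketched here as an assumption, is an Atom `<link rel="canonical">` element pointing at the equivalent webpage. `canonical` is a registered link relation, but the post doesn’t confirm that Bing reads it in this form. The function name and URLs below are hypothetical:

```python
import xml.etree.ElementTree as ET

ATOM_NS = "http://www.w3.org/2005/Atom"
LINK_TAG = f"{{{ATOM_NS}}}link"

# Serialize Atom elements in the default namespace instead of as "ns0:".
ET.register_namespace("", ATOM_NS)


def add_canonical_link(feed_xml: str, page_url: str) -> str:
    """Point an Atom feed at its equivalent webpage with rel="canonical"."""
    root = ET.fromstring(feed_xml)
    # Remove any existing canonical link so repeated runs don't duplicate it.
    for link in root.findall(LINK_TAG):
        if link.get("rel") == "canonical":
            root.remove(link)
    # Insert the canonical link as the first child of <feed>.
    root.insert(0, ET.Element(LINK_TAG, {"rel": "canonical", "href": page_url}))
    return ET.tostring(root, encoding="unicode")


feed = '<feed xmlns="http://www.w3.org/2005/Atom"><title>Search engines</title></feed>'
print(add_canonical_link(feed, "https://example.com/topic/search-engines/"))
```

ElementTree drops the XML declaration and any processing instructions outside the root element, so re-add those afterwards if your feed uses them. `register_namespace` changes a global mapping, which is fine for a small build script.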

This content duplication may also have been part of the underlying cause of my Bing delisting back in . I narrowed that problem down to something relating to link canonicalization and duplicated content, but I’d never thought of the syndication feeds as duplicate content until I reframed the problem and started thinking of them as webpages. Other search engines seem to be smart enough to discard them, after all.

If you have the same problem, you can apply this trick by sending the canonical URL in a `Link` HTTP response header with your feeds, instead of implementing XSLT. This should be quicker and much easier to implement.
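A minimal sketch of the header approach, assuming the feeds are served by a Python WSGI application (a static host or web server configuration can add the same header instead). The path-to-URL mapping and function names are hypothetical; the header value follows the standard `Link: <URL>; rel="canonical"` syntax:

```python
# Hypothetical mapping from feed paths to the webpages they duplicate.
CANONICAL_PAGES = {
    "/topic/search-engines/feed.xml": "https://example.com/topic/search-engines/",
}


def canonical_link_middleware(app, canonical_pages):
    """Wrap a WSGI app so mapped feed responses carry a rel="canonical" Link header."""

    def wrapped(environ, start_response):
        page_url = canonical_pages.get(environ.get("PATH_INFO", ""))

        def start_with_link(status, headers, exc_info=None):
            if page_url is not None:
                # Same meaning as <link rel="canonical"> in HTML, but sent as an
                # HTTP header, so the feed body itself doesn't need to change.
                headers = list(headers) + [("Link", f'<{page_url}>; rel="canonical"')]
            return start_response(status, headers, exc_info)

        return app(environ, start_with_link)

    return wrapped


def feed_app(environ, start_response):
    """Stand-in for whatever actually serves the feed files."""
    start_response("200 OK", [("Content-Type", "application/atom+xml")])
    return [b'<feed xmlns="http://www.w3.org/2005/Atom"/>']


app = canonical_link_middleware(feed_app, CANONICAL_PAGES)
```

To try it locally, `wsgiref.simple_server.make_server("", 8000, app).serve_forever()` serves the app, and `curl -i http://localhost:8000/topic/search-engines/feed.xml` should show the `Link` header alongside the unchanged feed.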

After confirming that Bing now ranks my webpages over their feeds, I began working on my page weight problem. Splitting my topic pages into multiple pages was long overdue. The downside is that you can no longer open a topic page and use in-page search to find a keyword. I doubt anyone but me regularly used that as a navigation method, however.
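One way to split a long topic listing, sketched in Python under assumptions the post doesn’t state: a hypothetical page size of 20 and a hypothetical `/topic/<slug>/page/<n>/` URL scheme in which page 1 keeps the existing topic URL:

```python
from math import ceil

# Hypothetical page size; the post doesn't say how many articles each page holds.
PAGE_SIZE = 20


def paginate(topic_slug, articles, page_size=PAGE_SIZE):
    """Split one long topic listing into numbered pages with their own URLs."""
    if page_size < 1:
        raise ValueError("page_size must be at least 1")
    # Always produce at least one page, even for an empty topic.
    page_count = max(1, ceil(len(articles) / page_size))
    pages = []
    for number in range(1, page_count + 1):
        start = (number - 1) * page_size
        # Hypothetical URL scheme: page 1 keeps the existing topic URL.
        if number == 1:
            url = f"/topic/{topic_slug}/"
        else:
            url = f"/topic/{topic_slug}/page/{number}/"
        pages.append({
            "number": number,
            "url": url,
            "articles": articles[start:start + page_size],
        })
    return pages
```

With the 80-article topic pages mentioned earlier, this yields four pages of 20 articles each instead of one heavy page.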

The underlying problem is probably that syndication feeds and image assets are widely distributed around the web. Syndication feeds in particular are made to be shared and distributed, so they attract an unusually large number of incoming links: signals that Bing probably reflected in its search results. Bing should know this isn’t the preferred representation, though. I haven’t encountered any other search engine that has the same problem telling machine- and human-readable documents apart.