Hotlink protection is the practice of serving different images based on the HTTP Referer (sic) header. In other words, serving one image when the image is requested from a page on your website and then serve a different image when it’s served from a page on another website. When websites include images from other websites without permissions, this is known as “hotlinking”.

Early-web hotlink protection measures were crude and not very sexy when seen with a modern eye. Yet its deployed by many websites because bandwidth still isn’t free and people who don’t know any better or just doesn’t care about free-loading the bandwidth of other websites continue to hotlink images.

The old way

The traditional hotlinking protection method consist of looking at the HTTP Referer (sic) request header for images and return a completely different image when the request originated from an unexpected domain. The returned image is often a sarcastic remark or verbal attack of the website that hotlinked the image. Google Image Search has some varied specimens.

This method is deployed on tens of thousands of domains and have been recommended and echoed on blog after blog over the last decade and a half.

This solves the immediate problem of having other websites and especially blogs and forums from hotlinking images. It’s rather effective as publisher quickly realizes that the image they thought they were including didn’t turn out to be what they expected.

It does, however, also negatively reflect on your website as you’re making an issue out of something that — to the average person on the web — is none of their concern, wasn’t ever a problem for them, and then you came along and made it a problem.

Another problem is that the web has changed a lot since this hotlinking technique was initially deployed. Bandwidth has become much cheaper and there are hundreds of social link collectives, news sites, and other networks; plus hundreds of hosted and self-hosted syndication feed readers, webmail clients, and machine-translation services.

To put it simply, there are a lot of places where people now expect hotlinking to work. It can hurt traffic growth opportunities if you return a passive aggressive or outright aggressive warning instead of an image representing your product, article, photo, or other creative works or products.

An alternate technique involves returning a HTTP 204 No Content status code and immediately dropping the connection. This most certainly significantly reduces the bandwidth costs associated with hotlinked images. Outright blocking hotlinked images has the drawback of potentially making your website appear to be broken.

Embedding/inline-loading is much more common now and it’s considered legitimate in many more contexts because of popular web reading list services, feed readers, social news sites, web mail, aggregates, etc. Maintaining an allow list of the services you approve of individually is impractical and would have to be constantly fine-tuned.

You definitively don’t want to shout at potential visitors who’re coming to your website from their webmail or social network sites about them stealing bandwidth. Yet this is exactly what many websites do.

As hotlinking can’t be prevented in any meaningful way, I like to focus on reducing it’s impact on my server and bandwidth instead. A hotlinked image will be siphoning server resources no matter what you do, so let us rather make the best of the situation.

Modernizing an old technique

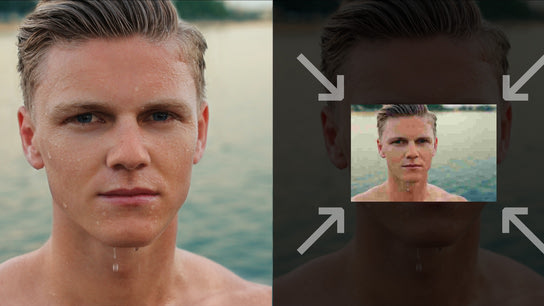

My technique is based on the same HTTP Referer (sic) technique as before, but instead of returning a completely different image with a warning – visitors on external websites get served a reduced quality variant of the original image.

This happens through an on-demand image processor that will intercept image requests when their referrer information doesn’t match that of my website. When the image processor is called upon, it will significantly reduce the dimensions and quality of the source image; thus reducing it’s demand on bandwidth.

While the image will remain identifiable and be easier on your bandwidth bill, it might reflect badly on the external website as they’ll suddenly have lower quality imagery on their site. If the external site is kind enough to link back to your website, the visitor would see the full image size. Loyal visitors on your site that have already cached the image would also still see the full-quality image on the external site.

A high-resolution 1000x600 image at JPEG quality 82 (WordPress default) can be compressed down to 300x180 at JPEG quality 25 and save as much as 96 % of the bandwidth demand per request. This does, of course, depend on the complexity of the image and other factors, but you’re looking at a hefty bandwidth saving while still having a mostly distinguishable image.

You should still allow for some exceptions, however. Images used in the OpenGraph Protocol and images in web syndication feeds should be excluded from bandwidth protection measures. This can easily be achieved by tagging them as being hosted in a different location or by including a special token in their URLs (such as ?is=ogp). Image requests referred through machine-translation services should also be excluded. I’ll get back to both of these later.

The last exception is that every website should be given a chance to cache your full quality image once before lowering the quality. OpenGraph consumers like Facebook, Google+, Twitter, and others will all try to cache images and serve them from their own servers. This is fine and frankly how the internet should work.

With that overly long introduction to the concepts of this more permissive – and dare I say modern — image protection technique out of the way; let us look at how it can be implemented.

Implementing the technique

I’ll go through the technicalities of this technique under a Linux+Apache+PHP server setup. I’ll be explaining every step and change along the way so you can adopt the method to other software stacks if need be.

Server requirements: Apache web server 2.4 or newer, PHP 5 or newer, the PHP GD graphics module (install and enable the php-gd package from your Linux distribution’s repository). Instructions for Security-Enhanced Linux (SELinux) is provided, but can be disregarded for systems where this isn’t enabled.

The five next sub-headings will implement the five different components of the previously described hotlinking protection technique.

Note that underlined variables in the code examples below must be changed in multiple locations if you modify them.

The hotlinked image cache

Constantly shrinking and compressing the same image would put an unnecessary strain on server resources. Every hotlinked image should thus be cached for some time so that subsequent requests can be served from the cache rather than reprocessed for every request.

Start by creating a folder for the image cache, and assign it to the apache user:

mkdir -m 755 "/var/www/cache/hotlinked-assets/"

chown apache:apache "/var/www/cache/hotlinked-assets/"For a system protected by SELinux, you’ll need to set a label like ‘read-writable web content type’ on the caching folder to allow for image files to be created and read from the cache directory:

semanage fcontext -a -t httpd_sys_rw_content_t "/var/www/cache/hotlinked-assets(/.*)?"

restorecon -R -v -F "/var/www/cache/"We’ll get back to how to prevent the image cache from consuming an enormous amount of disk space by cleaning out the files that aren’t getting requested a little later on.

With the cache in place, the next step is to set up the image processor that will receive requests for images, reduce them in size, and serve them back to the user.

On-demand image processor

This is a simplified version of what I use for this website. It should bee fully functional, however. I’m not going to explain the code itself in too great a detail, but the main logic of it’s as follows:

- Determine the path to the image file on disk from the request path coming in from the server. Verify that the file exists.

- If the file doesn’t exist, return a placeholder image.

- If the file does exist:

- Combine an unique hash of the path to the file name with the remote origin (domain name) that requested the image. This allows for per-origin processing.

- Check to see if the combination of hash and origin is already in the image cache, and return the image from the cache if it exists.

- If it’s not in the cache:

- Shrink and compress the image to the given quality parameters. Save the resulting image to the cache.

- Return the original image as this is the first request for the image from the given referring host.

I’ll leave the obvious optimization of not reprocessing the same hash (image path) multiple times up to the reader as an exercise.

Create a file called /assets/hotlinker.php (relative to your DocumentRoot) and include the following script:

<?php

# SPDX-License-Identifier: CC0-1.0

function request_uri_to_path($img_address) {

if (strpos($img_address, '..') !== false || strpos($img_address, '"') !== false || strpos($img_address, "'") !== false || strpos($img_address, '`') !== false) {

return false;

}

$file_path = "/var/www/html${img_address}";

if (file_exists($file_path)) {

return $file_path;

}

return false;

}

function get_hotlinkable_image( $img_address ) {

$img_path = request_uri_to_path(strtok($img_address, '?'));

if ($img_path === false) {

return false;

}

$referer_hostname = preg_replace("/[^a-z0-9]/", '_', parse_url($_SERVER['HTTP_REFERER'], PHP_URL_HOST));

$img_hash = md5($img_path, false);

$file_path = "/var/www/cache/hotlinked-assets/${img_hash}-${referer_hostname}.jpeg";

if (file_exists($file_path)) {

return $file_path;

}

# Maximum dimensions

$width = 550;

$height = 270;

# Calculate new image ratio

list($width_orig, $height_orig) = getimagesize($img_path);

$ratio_orig = $width_orig / $height_orig;

if ($width / $height > $ratio_orig) {

$width = $height * $ratio_orig;

} else {

$height = $width / $ratio_orig;

}

# Read in the original image and compress it

$image_p = imagecreatetruecolor($width, $height);

$image = imagecreatefromstring(file_get_contents($img_path));

imagecopyresampled($image_p, $image, 0, 0, 0, 0, $width, $height, $width_orig, $height_orig);

imagedestroy($image);

# Write out new cached image

imagejpeg($image_p, $file_path, 30);

imagedestroy($image_p);

return $img_path;

}

# Serve an image

$image = get_hotlinkable_image($_SERVER['REQUEST_URI']);

if ($image === false || !isset($image)) {

$image = '/var/www/html/assets/img/fallback-hotlinking-warning.png';

}

$fp = fopen($image, 'rb');

header('Vary: Referer');

header('Content-Type: image/jpeg');

header("Content-Length: " . filesize($image));

fpassthru($fp);This code has obviously been minimized for the purpose of demonstrating how to implement the above logic. It will, however, perform it’s job adequately.

The next step is to start diverting requests coming from external domains via the new hotlink request image processor that we just setup.

Rewriting externally referenced images in Apache

Include the below Apache configuration chunk in your VirtualHost or Server block for the domain you want to deploy the protection mechanism on.

# Hotlink protection

RewriteEngine On

RewriteCond %{REQUEST_URI} ^/images/(.*).(gif|jpe?g|png|webp)$ [nocase]

RewriteCond %{HTTP_REFERER} !^$

RewriteCond %{HTTP_REFERER} !^https?://(www.)?example.com/.*$ [nocase]

RewriteCond %{HTTP_REFERER} !^https?://((translate|www).)?(baiducontent|fanyi.baidu|googleusercontent|microsofttranslator).com/.*$ [nocase] [OR]

RewriteCond %{QUERY_STRING} !is= [nocase]

RewriteRule ^(.*)$ /assets/hotlinker.php?$1 [last]The above consists of two sets of rewrite conditions. The first set starts by checking that the requested resource is in the /images/ path, and then checks to see that the referer (sic) header is either empty or matches your domain name.

The second set of domains checks against popular machine-translation services from the Baidu, Google, and Bing search engines. For the purposes of hotlink protection, these service’s domains should be considered to be synonymous with your domain.

Depending on your type of website and its contents, machine-translations will open up your websites to a much larger segment of the world’s 7.3 billion population. Note that these domains shouldn’t be considered as synonymous for other security related purposes.

You’ll realize why this last set of rules re important if you’ve ever come across an interesting blog post in a language you don’t understand – only to be met by aggressive accusations of hotlinking and bandwidth theft in the translated version of the page.

These translation services only swap out the text strings of your website with machine-translated variants in the visitor’s native language. Your ads and page are otherwise left unchanged.

The last part of the rewrite instruction is the rewrite rule. It says to pass any request for image resources that don’t meet the above criteria through the on-demand image processor that we set up before. Note that this address must be relative to the host root (the DocumentRoot) directory, and must not be an absolute URL.

Run the below command to perform an automatic test on your configuration before proceeding to spot any mistakes you may have made. If you’ve modified the regular expressions and run into problems, you might find Regex101 helpful in troubleshooting the problem.

apachectl configtestIf you didn’t run into any issues, then you should now be all set to start serving the reduced quality image on hotlinking sites. Restart Apache to apply your changes. Note that the “httpd” web server service may be called “apache” on some systems.

service httpd stop

service httpd startPeriodic clean-up

To make sure the cache stays lean, you should make sure to automatically clean up files when there’s no longer any interest in them.

Add a crontab to periodically delete generated images that haven’t been accessed in a more than two months. Run crontab -e and introduce the following weekly cleanup command:

@weekly nice -n 18 find "/var/www/cache/hotlinked-assets/" -name "*.jpeg" -atime 30 -deleteThis runs the find program through the image asset cache and cleans out files with no recorded access in the last 30 days. The command is then run once every week through the nice program to run it at a low system priority with minimal impact on the system.

You can reduce or increase the last-access time check, but you shouldn’t run this any less often than once a week. If you put off the task for too long, your image cache can grow quite large depending on the number of hotlinking hosts and images.

Website modifications

The only modification required to your website is for images that you wish to exempt from quality degradation. This could include the website icon or similar. You can exclude them by putting them in another folder than your primary image folder or using a query parameter tag.

The following example shows how you can append the exclusion parameter on images intended to be embedded on external websites via the OpenGraph Protocol (OGP), or a Twitter Summary Card:

<meta property="og:image" content="https://www.example.com/images/wet-man-300x300?is=ogp">

<meta name="twitter:image" content="https://www.example.com/images/wet-man-300x300.jpeg?is=twitter">It doesn’t matter what value or even if you include a value you give the parameter. It’s the fact that it’s set which makes it trigger the exception to the rewrite condition. Of course, this means that anyone can bypass your image hotlink protection system by appending the same parameter to any other image.

However, the likelihood that anyone would realize let alone do this is minuscule, and if it ever where to happen it would more likely be accidental than intentional.

You can take this a step further by counting and limiting how many times an image can be requested from the same external referral domain before you start degrading the image quality.

Apple News, Facebook, Feedly, Flipboard, Google+, Twitter, and other large platforms that consume OpenGraph Protocol data all deploy caching and serve your images from their own servers. An non-well-behaved OpenGraph data consumer would stand out by requesting the same image more than maybe two–five times per hour (or more likely days).

Other approaches?

My personal opinion is that hotlinking is a form of redistribution of a creative work. (How could anyone debate that fact?) Thus, it’s practice should be restricted under copyright law.

However, people’s understanding of copyright is very limited and people feel entirely entitled to not only steal creative works of others but also the server resources and bandwidth of other websites.

You may want to try asking the websites that hotlink your images to a) stop stealing your images, and b) at the very least stop stealing your bandwidth.

Unfortunately, it’s virtually impossible to get blog platforms like Blogger by Google, hosted blogs WordPress.com, and the web’s thousands of forum operators to take action against hotlinking by their members. Yet alone other websites.

There’s often both hard and time-consuming to even attempt to contact the person who performed the hotlinking. Getting that person to remove the link is a fool’s quest and you’ll more often than not just be ignored or met with absolutely no understanding.

Like when I encountered content scrapers stealing my articles a few months back, I believe the only effective countermeasure is a technical measure like the method outlined in this article.

Pixabay, a public domain photography gallery, has experimented with watermarking and attempts to hijack clicks from Google Image Search to corresponding pages on their website rather than allowing direct linking. As of today, it would seem that they only reduce image quality